OpenAI API, Assistants API, and GPTs

Posted on 2024/01/12 by GoAPI

With the launch of the GPT Store on January 10th 2024, public interest in GPTs, the Assistants API, and ChatGPT is surging again. Since we at GoAPI have developed the unofficial GPTs API for developers, we are seeing a large number of questions from users confused about the differences between the various OpenAI products and services. We have therefore written this blog post to help clear up some of the common confusion.

ChatGPT

This is the popular chatbot developed by OpenAI. Powered by sophisticated Large Language Models (LLMs) such as GPT-3.5 and GPT-4, the chatbot experienced the fastest user growth in modern software history, assisting users worldwide with all kinds of everyday tasks.

This was both historic and fantastic. But if you are a developer who wants to incorporate these LLMs into your own applications, what can you do? Enter the OpenAI API.

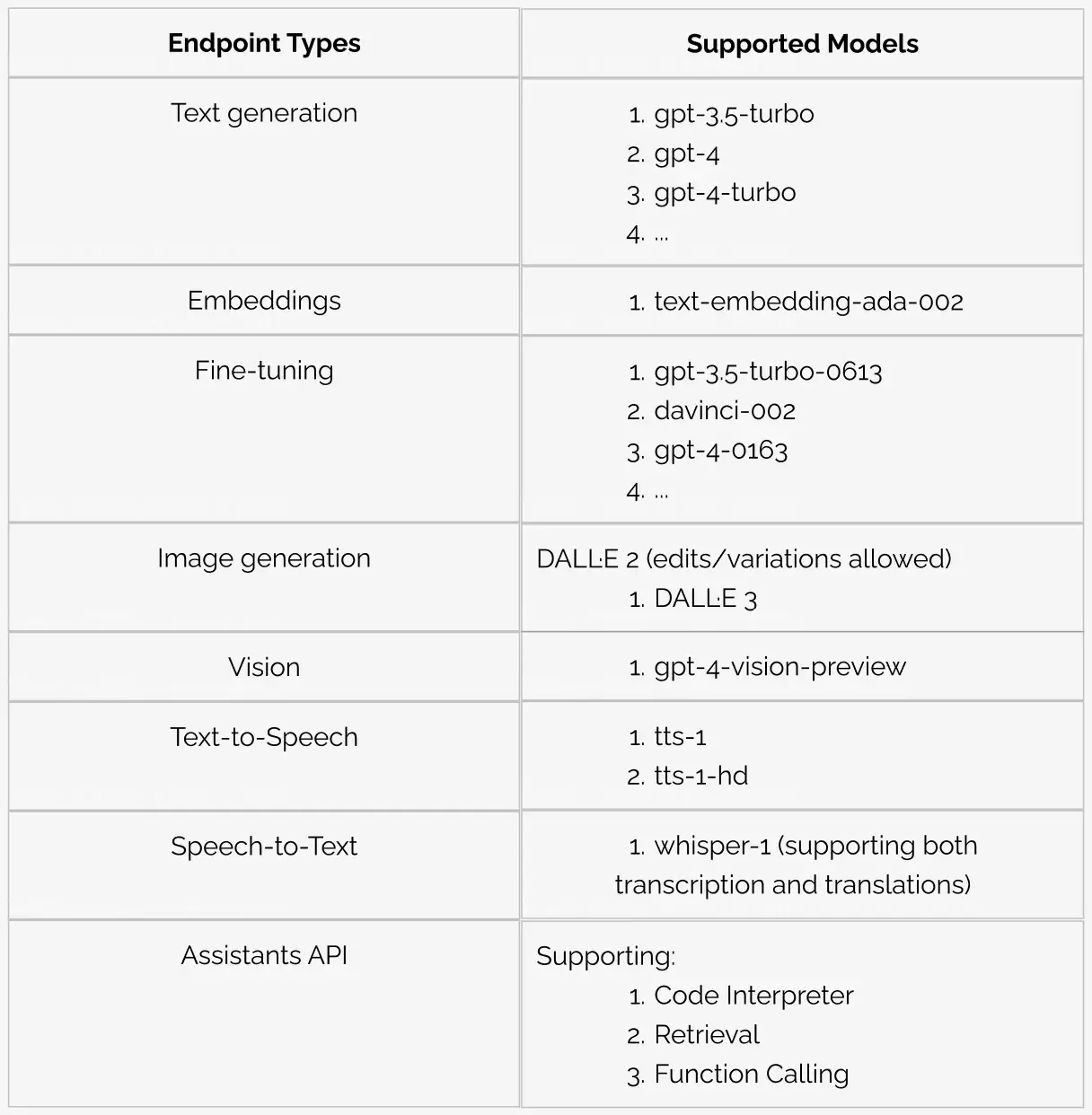

Assistants API

As the table above shows, the Assistants API is part of the OpenAI API family. It lets developers build assistants that combine the LLMs with useful tools for their tasks. Three tools are currently supported: Code Interpreter, Retrieval, and Function Calling.
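To make this concrete, here is a minimal sketch of the request body for creating an assistant with all three tools enabled. It assumes the v1 beta of the Assistants API that was current when this post was written (a POST to https://api.openai.com/v1/assistants with the `OpenAI-Beta: assistants=v1` header); later API versions renamed some tools, so please check OpenAI's API reference before relying on it. The model name, the assistant's name and instructions, and the `get_order_status` function are hypothetical placeholders, and the sketch only builds the payload without making any network call.

```python
import json

# Placeholder model name (assumption); use any model your account can access.
MODEL = "gpt-4-1106-preview"

# Headers for the Assistants API v1 beta; YOUR_API_KEY is a placeholder.
HEADERS = {
    "Authorization": "Bearer YOUR_API_KEY",
    "Content-Type": "application/json",
    "OpenAI-Beta": "assistants=v1",
}


def build_assistant_request(model: str, name: str, instructions: str) -> dict:
    """Return a JSON-serialisable body for POST /v1/assistants (v1 beta)."""
    return {
        "model": model,
        "name": name,
        "instructions": instructions,
        # One entry per enabled tool: the three tool types of the v1 beta.
        "tools": [
            {"type": "code_interpreter"},
            {"type": "retrieval"},
            {
                "type": "function",
                "function": {
                    # Hypothetical function, described with JSON Schema.
                    "name": "get_order_status",
                    "description": "Look up the status of an order by its ID.",
                    "parameters": {
                        "type": "object",
                        "properties": {"order_id": {"type": "string"}},
                        "required": ["order_id"],
                    },
                },
            },
        ],
    }


if __name__ == "__main__":
    body = build_assistant_request(MODEL, "Support helper", "Answer customer questions.")
    print(json.dumps(body, indent=2))
```

Sending this body with any HTTP client (or the official SDK) would create the assistant; you then add messages to a thread and start a run against that assistant.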

Plugins/Tools

A short note on plugins: plugins are tools that extend ChatGPT's capabilities. Given how useful and powerful LLMs already are, it is easy to imagine how plugins can help explore further use cases for the models. External developers such as Zapier, Shopify, and Wolfram have built their own plugins, and OpenAI also provides first-party tools; Code Interpreter, Retrieval, and Function Calling are the ones available in the Assistants API.

Code Interpreter

According to OpenAI, Code Interpreter provides the models with “a working Python interpreter in a sandboxed, fire-walled execution environment, along with some ephemeral disk space”. This means a wide range of Python libraries is at your disposal when you use the Code Interpreter tool. Some potential use cases include:

Solving math problems

Data analysis and visualisation

File format conversions
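When an assistant uses Code Interpreter, the code it writes and the output it gets back are recorded as a run step that you can inspect. The sketch below pulls the code and its text logs out of such a step. It assumes the v1 beta run-step shape as we understand it (`step_details.tool_calls[].code_interpreter` with `input` and `outputs`), so verify the field names against OpenAI's API reference; the example step, including its IDs and log output, is hand-written for illustration rather than a real API response.

```python
def extract_code_interpreter_calls(run_step: dict) -> list:
    """Return (code, logs) pairs for each Code Interpreter call in a run step."""
    details = run_step.get("step_details", {})
    if details.get("type") != "tool_calls":
        return []  # e.g. a "message_creation" step contains no tool calls
    pairs = []
    for call in details.get("tool_calls", []):
        if call.get("type") != "code_interpreter":
            continue  # skip retrieval and function calls
        ci = call["code_interpreter"]
        # Outputs can be text logs or images; keep only the text logs here.
        logs = [out["logs"] for out in ci.get("outputs", []) if out.get("type") == "logs"]
        pairs.append((ci.get("input", ""), logs))
    return pairs


# Hand-written example step (IDs and content are made up for illustration).
example_step = {
    "object": "thread.run.step",
    "type": "tool_calls",
    "step_details": {
        "type": "tool_calls",
        "tool_calls": [
            {
                "id": "call_example",
                "type": "code_interpreter",
                "code_interpreter": {
                    "input": "import math\nprint(math.factorial(5))",
                    "outputs": [{"type": "logs", "logs": "120\n"}],
                },
            }
        ],
    },
}

if __name__ == "__main__":
    for code, logs in extract_code_interpreter_calls(example_step):
        print("Code run in the sandbox:\n" + code)
        print("Logs:", logs)
```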

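Retrieval

Retrieval augments the assistant with knowledge from outside the model, such as proprietary product information or documents provided by your users. Once files are uploaded and attached to the assistant, OpenAI automatically chunks and indexes them and searches them to find content relevant to each user query.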

Function Calling

It is only natural that you want to connect LLMs to other external tools. To do this, you need to get structured data from the LLMs, and that is what function calling does. It allows you to describe functions to the Assistant and have it return the functions that need to be called along with their arguments.
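Here is a minimal sketch of the developer's side of function calling with the Assistants API. When a run needs a function, its status becomes `requires_action` and it lists the requested calls; your code executes them and sends the results back through the run's submit_tool_outputs endpoint. The payload shapes follow the v1 beta as we understand it, and `get_weather`, its schema, and its stub data are hypothetical.

```python
import json

# JSON Schema description of a hypothetical function, as you would list it
# in the assistant's "tools" so the model knows it can ask for it.
GET_WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
}


def get_weather(city: str, unit: str = "celsius") -> dict:
    # Stub data for illustration; a real app would call a weather service.
    return {"city": city, "temperature": 21, "unit": unit}


# Maps the function names described to the assistant onto Python callables.
AVAILABLE_FUNCTIONS = {"get_weather": get_weather}


def handle_required_action(run: dict) -> dict:
    """Execute each requested function call and build the submit_tool_outputs body."""
    tool_calls = run["required_action"]["submit_tool_outputs"]["tool_calls"]
    outputs = []
    for call in tool_calls:
        name = call["function"]["name"]
        # The model returns the arguments as a JSON-encoded string.
        args = json.loads(call["function"]["arguments"])
        fn = AVAILABLE_FUNCTIONS.get(name)
        result = {"error": f"unknown function: {name}"} if fn is None else fn(**args)
        # Each output is sent back as a string, keyed by the tool call ID.
        outputs.append({"tool_call_id": call["id"], "output": json.dumps(result)})
    return {"tool_outputs": outputs}


# Hand-written example of a run waiting on a function call (IDs are made up).
example_run = {
    "status": "requires_action",
    "required_action": {
        "type": "submit_tool_outputs",
        "submit_tool_outputs": {
            "tool_calls": [
                {
                    "id": "call_example",
                    "type": "function",
                    "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'},
                }
            ]
        },
    },
}

if __name__ == "__main__":
    print(json.dumps(handle_required_action(example_run), indent=2))
```

Returning an error object for an unknown function name, rather than raising, lets the assistant see what went wrong and continue the conversation.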

GPTs

And now, we move to the latest exciting news from OpenAI: GPTs! They let ChatGPT Plus Subscribers create their own versions of ChatGPT without writing any code and publish them to the GPT Store, where they can rank on the leaderboard and be used by any other Plus Subscriber.

GPTs and the GPT Store together effectively build an ecosystem of users and builders (blurring the distinction between the two as well), increasing the usefulness of the underlying LLMs and attracting new users to become ChatGPT Plus Subscribers, thereby increasing revenue for OpenAI.

However, if more users end up becoming ChatGPT Plus Subscribers, that means they are less likely to pay for your app. That is a very big problem for you as a developer.

GPTs API (by GoAPI)

As you can probably guess, OpenAI is unlikely to release a GPTs API the way it did with the OpenAI API, because one of the main goals of GPTs and the GPT Store is to attract users into the ChatGPT ecosystem, and an API would work directly against that goal.

That is why we at GoAPI developed the unofficial GPTs API, which lets the users of your apps access the ecosystem of powerful GPTs without needing a ChatGPT Plus account (i.e., your users keep paying you instead of switching to paying OpenAI).

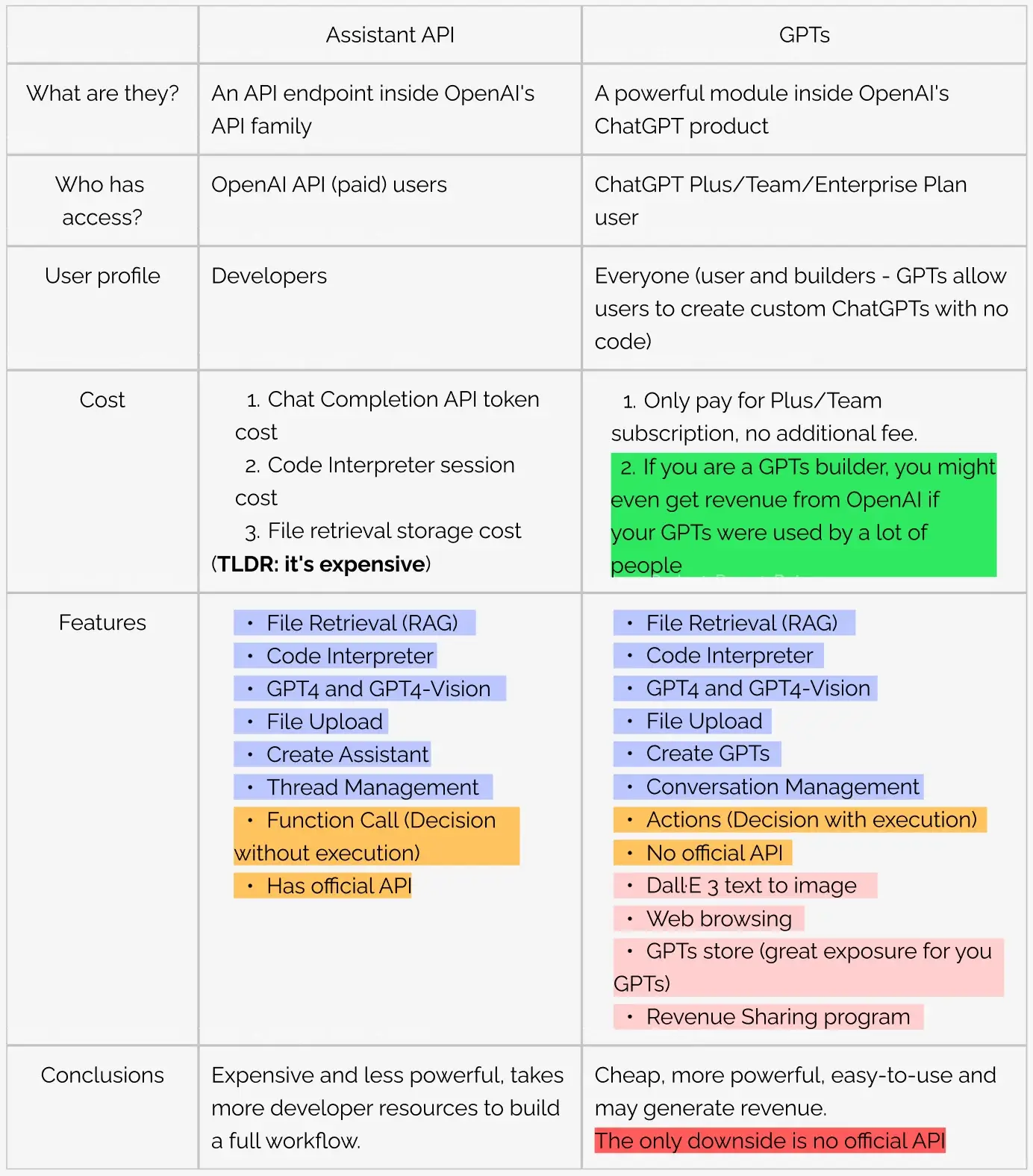

Some developers are asking what the differences are between the Assistants API and GPTs. Since answering that from the text above takes a lot of reading, we have prepared the table below to give you the quick version:

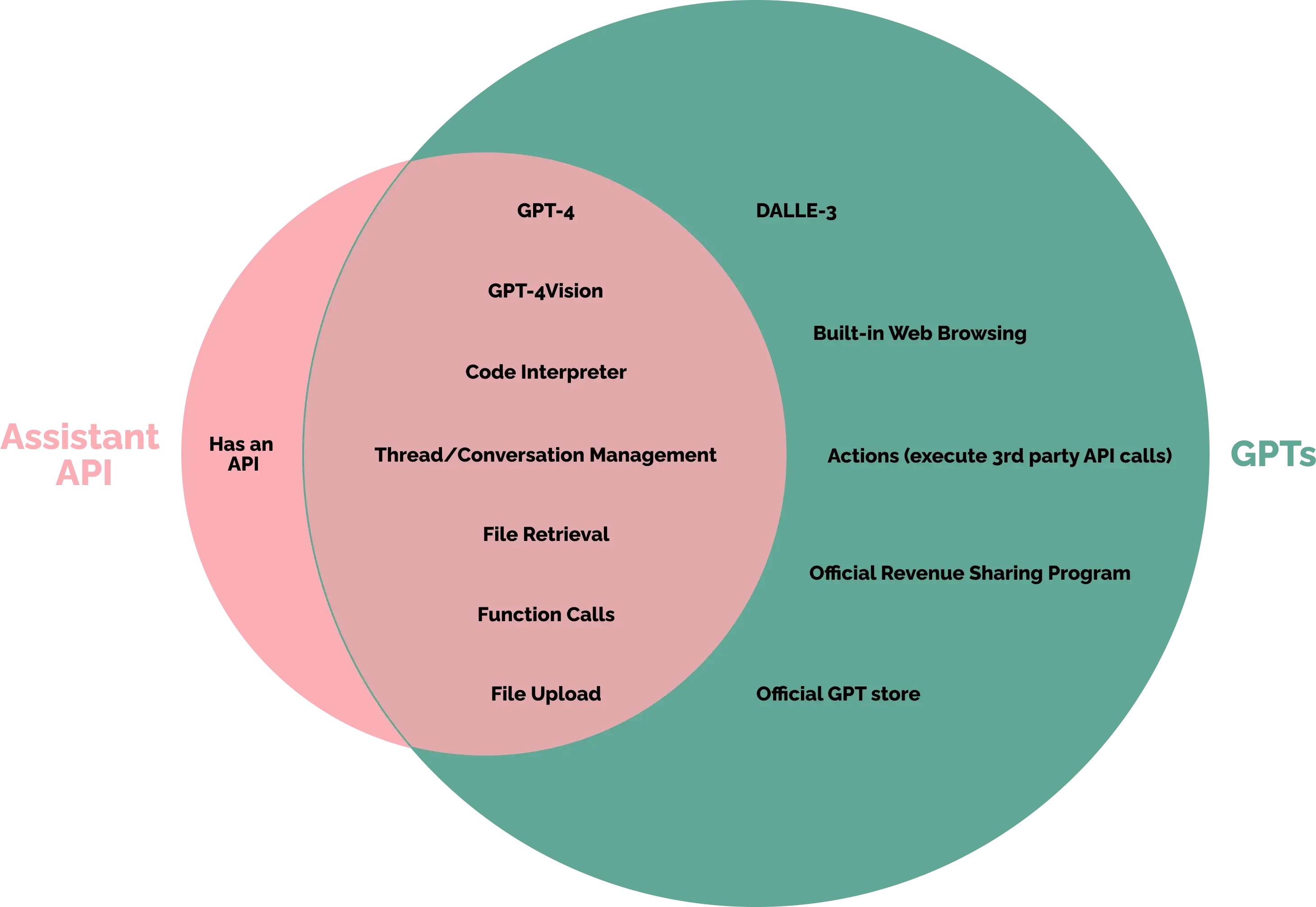

Summarised as an illustration, the table above looks like this:

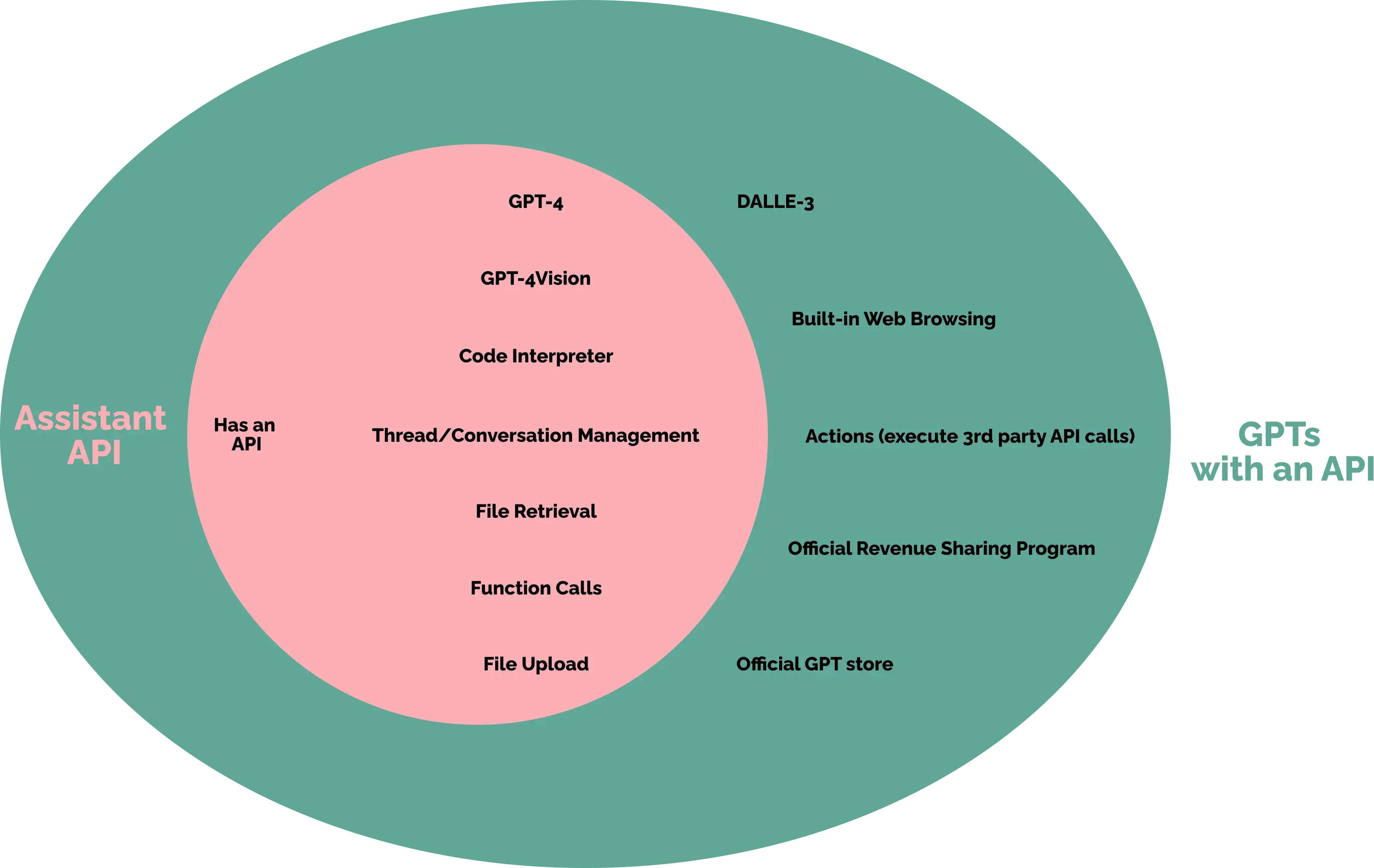

With the GPTs API from GoAPI, your users can now access your GPTs or any public GPT, so the illustration changes to the following:

You can see that GPTs (with an API) outperform the Assistants API at every level.

Happy coding guys! :)